Self-Directed AI: Ethics in Predictive Learning

The field of AI is riddled with ethical quandaries. As we develop our machines to act with more free agency, how then do we ensure they act ethically?

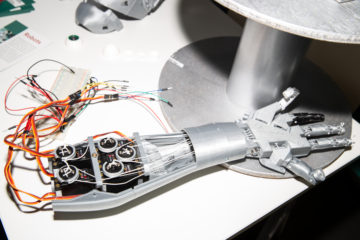

Self-directed artificial intelligence, or predictive learning, is a tantalizing prospect that stands to revolutionize the entire machine learning industry. With it comes a degree of autonomy not demonstrated in current supervised learning algorithms, such as the ones used in self-driving cars.

Right now, we must teach our machines the difference between right and wrong. However, if we are to move toward more self-directed algorithms, we must create an ethical model that can be replicated without human input. A moral code for our algorithms.

Active steps are already being taken to codify our collective values as a society. MIT’s Moral Machine is a prime example. It asks humans to assess several emergency scenarios for an autonomous vehicle and records their responses. For example, should a car prioritize the life of a single driver or the lives of a group of pedestrians? What if those pedestrians are all criminals and the driver is a doctor?

While the scenarios pertain only to self-driving cars, the website attempts to define our collective morality: do certain lives hold more value than others?

Posing ethical questions as MIT does will become increasingly important as we delve further into automation and artificial intelligence, but such questions present a binary view of morality that somewhat misses the point of defining a universal code of ethics. Choosing scenario A over scenario B isn’t a realistic assessment of human morals because, in a real-life situation, the possible courses of action can be nearly infinite.

The ideology and methodology behind our current approach toward machine morality bring to mind Spock’s famous quote: “The needs of the many outweigh the needs of the few.”

Although they sound profound and make for memorable television, the Vulcan’s words are actually a perversion of utilitarianism. A more accurate summation of that philosophy comes from John Stuart Mill, a highly influential English philosopher and economist:

“Actions are right in proportion as they tend to promote happiness; wrong as they tend to produce the reverse of happiness. By happiness is intended pleasure and the absence of pain.”

Our machines need to be able to consider the immediate results of their actions, as well as the long-term implications of those actions. Should the car avoid a group of pedestrians if the driver could potentially cure cancer? Does a banker’s life take priority over that of a criminal?

This is rooted in our ability to define our own morals. Reams of philosophical writing attempt to define right and wrong, good and evil, but no definitive consensus exists. As human beings, we generally have a sense of what’s depraved and what is acceptable. For example, murdering people without motive is usually seen as wrong. However, even in these cases there are exceptions: in war and self-defense, killing is seen as necessary, and even acceptable. Most attempts to define actions as right or wrong will be met with exceptions. The question then becomes how we define and categorize these exceptions.

Off the cuff, I’d say that morals can be classified in orders of magnitude. First you have basic human rights, which tend to be agreed upon across cultures. These would be principles such as “killing without motive is immoral” and “people deserve access to food and shelter.”

At the highest level, these can be seen as universal truths. However, when you start moving into the practical implementation of these ideals, tricky questions with no clear answer begin to arise.

If providing one person access to housing meant depriving another of food, which would take priority? Or perhaps you could offer 10 people permanent housing at the expense of 1 person’s life—would that be an acceptable trade-off? A utilitarian might think this to be acceptable if it resulted in a net increase in happiness. And in a more tangible dilemma, if the city budget had only enough provisions for food stamps or housing initiatives, which should be funded?

These are questions that have no clear answer, and the solutions tend to rely on the loudest voices in the room. Sometimes the more efficient solution is to prioritize the needs of the few because of the larger societal implications. There is no concrete way to model these types of decisions, as each situation must be assessed organically while taking into account public sentiment and human emotion.

Perhaps this is why predictive learning hasn’t gained more traction. If we cannot define our own collective morals, how then can we imprint them upon the machines we create?